Communicating Design Intent Using Drawing and Text

Abstract

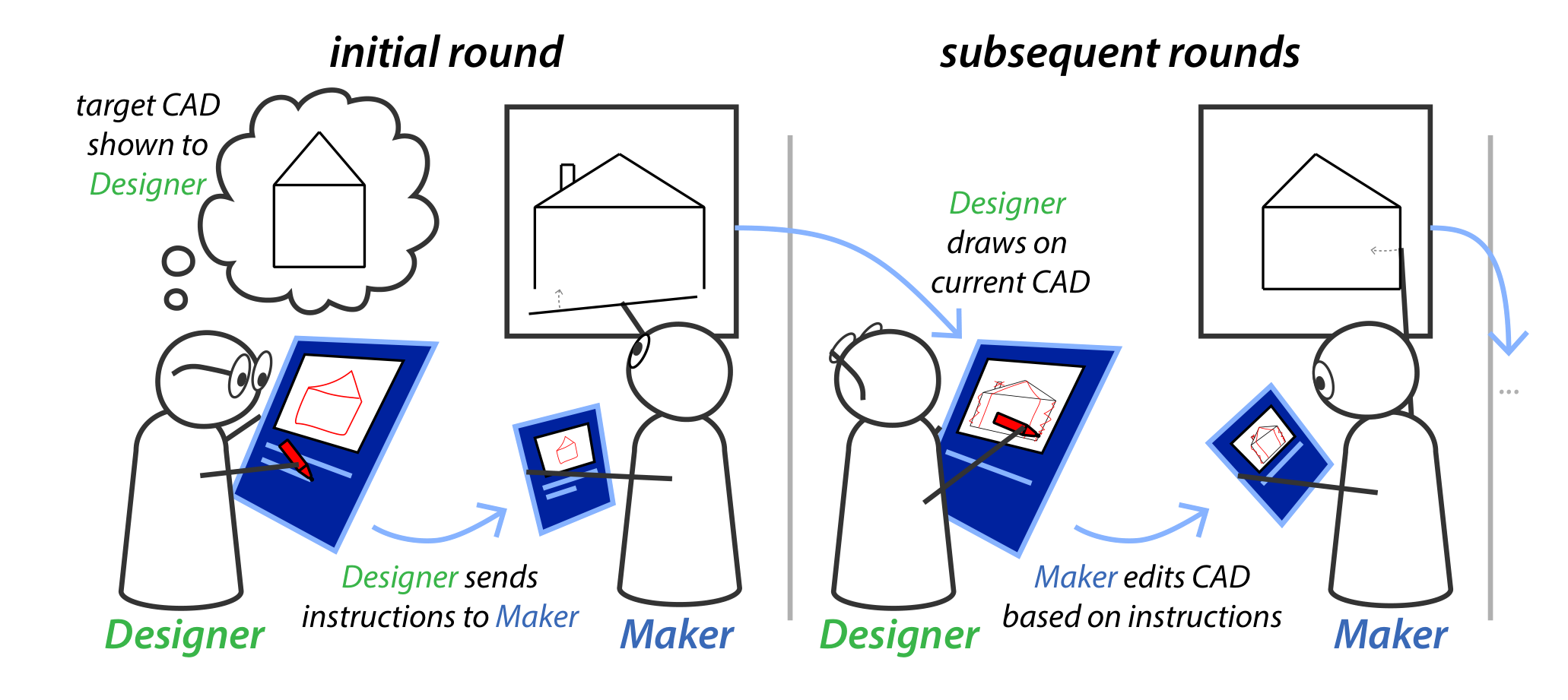

Realizing a designer’s intent in software currently requires tedious manipulation of geometric primitives, such as points and curves. By contrast, designers routinely communicate more abstract design goals to one another using an efficient combination of natural language and drawings. What would it take to develop artificial systems that understand how humans naturally convey design intent, and thereby enable more seamless interactions between humans and machines throughout the design process? First, it is vital to establish benchmarks that showcase the full range of strategies that humans use to successfully communicate about design intent. Here we take initial steps towards that goal by conducting an online study in which pairs of human participants – a “Designer” and “Maker” – collaborated over multiple turns to recreate target designs. In each turn, Designers sent messages containing language, drawings, or both to the Maker, describing how to modify an existing design toward the target. We found a preference for communicating using drawings in early turns and observed several multimodal strategies for conveying design intent. By comparing how human Makers and GPT-4V carried out instructions, we identify a gap in human and machine understanding of multimodal instructions and suggest a path for bridging this gap.

BibTeX

@inproceedings{10.1145/3635636.3664261,
  author = {McCarthy, William P. and Matejka, Justin and Willis, Karl D.D. and Fan, Judith E. and Pu, Yewen},
  title = {Communicating Design Intent Using Drawing and Text},
  year = {2024},
  isbn = {9798400704857},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  url = {https://doi.org/10.1145/3635636.3664261},
  doi = {10.1145/3635636.3664261},
  abstract = {Realizing a designer’s intent in software currently requires tedious manipulation of geometric primitives, such as points and curves. By contrast, designers routinely communicate more abstract design goals to one another using an efficient combination of natural language and drawings. What would it take to develop artificial systems that understand how humans naturally convey design intent, and thereby enable more seamless interactions between humans and machines throughout the design process? First, it is vital to establish benchmarks that showcase the full range of strategies that humans use to successfully communicate about design intent. Here we take initial steps towards that goal by conducting an online study in which pairs of human participants – a “Designer” and “Maker” – collaborated over multiple turns to recreate target designs. In each turn, Designers sent messages containing language, drawings, or both to the Maker, describing how to modify an existing design toward the target. We found a preference for communicating using drawings in early turns and observed several multimodal strategies for conveying design intent. By comparing how human Makers and GPT-4V carried out instructions, we identify a gap in human and machine understanding of multimodal instructions and suggest a path for bridging this gap.},
  booktitle = {Proceedings of the 16th Conference on Creativity \& Cognition},
  pages = {512--519},
  numpages = {8},
  location = {Chicago, IL, USA},
  series = {C\&C '24}
}